Fintech's AI moment: Eric Glyman in conversation with Reid Hoffman and Greylock

- The rise of the AI copilot

- Applying AI to finance and business operations

- Build guardrails around AI accuracy

- Choose between large language models and fine-tuned models

- The impact that AI will have on regular people

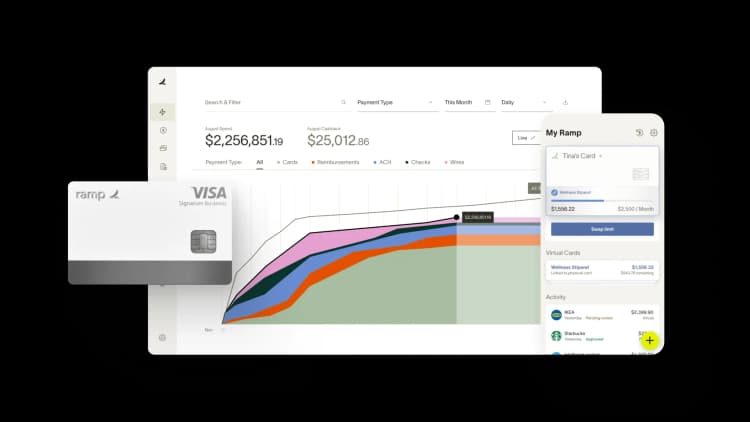

ChatGPT's burst onto the AI scene has made leaders in every industry re-examine their technology stack and rethink the applications of AI. At Ramp, we've been leading the charge on transforming finance through machine learning and finance AI from day one. Our CEO Eric Glyman recently sat down with Greylock's Seth Rosenberg and Reid Hoffman to discuss the tangible outcomes that AI can drive for organizations, especially within the finance function. Check out the video recording below:

Full transcript is available here. Short on time? Here are the key takeaways from Reid and Eric, lightly edited for clarity:

The rise of the AI copilot

Reid: "Every professional will have a copilot that is between useful and essential within 2 to 5 years. And we define professional as you process information and do something on it. That’s just from the large language models. In addition to amazing things from large language models, I think we will see other techniques [using] this kind of scale-compute to create things. We’ll see melds of them in various ways. You see some of that with Bing chat going 'Okay, we’ve got scale-compute and server which has truth and identity and a bunch of other stuff, along with large language models. And here’s what revolutionizes the search place.'"

Applying AI to finance and business operations

Eric: "[At Ramp] we’re focused on functionality, workflow and productivity related to movement of money... Within accounting, there are generally accepted accounting principles. These are rules for how transactions should be categorized and coded. What are the patterns and how can you learn from the 10,000-plus businesses, hundreds of thousands of folks automating both keeping of records, risk management and assessment to even go to market?... The way that Ramp has been able to grow so efficiently has come down to embracing AI in our sales characteristics, lead routing, mapping, and the like... There are probably ten core workstreams all throughout the business that are leveraging AI in some way, from pattern matching to generative use cases."

Build guardrails around AI accuracy

Reid: "Obviously, we worry about things like AI applied to credit decisioning. Do you have systematically biased data? One of the benefits [with AI] is that it becomes a scientific problem, which you can then work on and fix as opposed to the human judgment problem where you say, 'Well, we have that human judgment problem in how we’re allocating credit scores or credit worthiness or parole or everything else today.'"

Eric: "I do think the copilot model is a powerful one. One of our core customers is accountants. [An AI copilot could help them with] where they need to be going, pattern-matching, taking the learnings, bringing that back to augment and speed up...It’s really thinking about where AI needs to be accurate to a high degree. [For example] with risk and underwriting, you may not want AI making all the decisions...the copilot design pattern has been an emerging first way to deal with it."

Choose between large language models and fine-tuned models

Eric: "For a variety of use cases, I wouldn’t bet against what’s happening in the mega models themselves for the vast amount of use cases, and powering more experienced generalized use cases using that. But as folks building businesses, there are a variety of other things I would be thinking about: is there proprietary data that’s involved in the workflow of your business? Is there a data effect? Is there some level of personalization? And as you run it through, does the experience get better for every customer? Specifically for finance, most of these mega models have been trained primarily [on] text or images, [or] in some cases code. I think that there are larger models being built on numbers, relationships, accounting. And so I think the answer will evolve over time. I think at the outset of the training set of the mega models, the functional answer today is train more locally. But be ready and think about if your stack is prepared to make a switch, and evaluate. I think that the core infrastructure and the way it could evolve is changing so rapidly. And so building in a way where you need to change things out is important in this style of architecture."

Reid: "If your theory of the game is a thin layer around the AI model, you’d better be playing on the trend of the large models. If that’s not your theory, then the small model or the self-run model or whatever can itself go. But what happens is people go, 'Well, I just put a thin model layer around it.' Well, the large model is going to blow you out of the water almost every single time, unless you just happen to be of the thought that, 'I’m training to be the next large model’s back end.'"

The impact that AI will have on regular people

Eric: "When I think about most aspects of financial services, there’s an incredible amount of busy work that’s required, whether it’s in applications, in reviews, in submitting expense reports and doing accounting, or doing procurement and figuring out what things cost. And if you collapse the amount of work it takes in order to get at more data and understand what’s happening in the world and have the world’s knowledge given to you more rapidly, personalized to the problems you’re solving or have work done for you, it’s incredibly freeing. For us, one of the most common things that customers will say about Ramp is, 'I don’t think about expense reports anymore. I have time actually to do the interesting strategic work, not just to go and collect receipts and tag transactions.' And I think that’s a very small and early sign of things to come. And so in many ways, allowing people to be more strategic and focus on higher-level work in more interesting and profound questions, I think that’s the potential."

At Ramp, we're working on turning AI in finance into a real tool that finance leaders can use on a day-to-day basis. Stay tuned: exciting updates are on the way!
